In a previous article, I showed that it is possible to connect a Bluetooth module to an ATMega microcontroller via the UART serial interface and then discover it from a Bluetooth-enabled computer or smartphone. The 9600 bps serial link, once established, can then be used to exchange data. An Android phone is no exception: I will use one to control the Perseus 3 robot platform.

What I’m doing is directly interfacing the Android phone with the ATMega microcontroller via the serial Bluetooth link. You can use this technology for purposes other than robots, since the ATMega has a large range of possible uses: control the lights in your home from the Android phone, read various sensors and gather the data on the phone, and more. The technology presented here can easily be extended: instead of an Android phone you can use any Bluetooth-enabled programmable device (a Windows Mobile smartphone, an iPhone, etc.), and instead of this robot you can use any other hardware system. The idea is to show how far automation can go.

Android Software

Using Bluetooth on Android is still problematic, meaning that you have access only to very basic functionality.

The upside is that the platform keeps evolving and improving while staying simple and accessible, so I am confident Android will soon be a complete alternative for advanced applications.

To control Bluetooth on Android, there are two possibilities:

1) Rely on BlueZ and native C code. You can do this either with the Android toolchain or, more comfortably, using JNI. Take a moment to read both of my articles for a better understanding.

This approach works on all Android platforms.

2) Use the Bluetooth SDK that Google introduced in Android 2.0. It is incomplete, but will probably get better (soon?), and it is enough for serial connections. I will use this approach, but will also provide an example for BlueZ and JNI.

Bluetooth on Android using BlueZ and JNI

First you need to set up your software tools as presented here.

For this example, I will only show how to write C code for the BlueZ stack, and the JNI interface, so that you can do a Bluetooth device discovery. This technique works on all older Android devices.

The interface is using TABs, one for Bluetooth Discovery and another one for controlling the ROBOT. The tabs are created dynamically, as presented in this article.

The discovery results are displayed in a listview. The listview is also created dynamically (no XML), using a simple technique presented here.

Among the many Bluetooth devices detected, the robot is identified by its Bluetooth address, in my case 00:1D:43:00:E4:78.

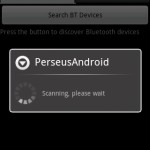

Here are some pictures with the result:


The code, both Java and C (JNI), is available here:

android bluetooth discovery sample (604KB)

If you want to recompile the Java part, please see this tutorial.

If you want to recompile the JNI C code, you will find this article useful.

The code defines an array of devices (BTDev BTDevs[];). When the button is pressed, it calls the native function (intDiscover) to populate the BTDevs array, and then uses the array to fill the ListView.
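As a rough illustration of how a populated device array could be searched for the robot (this is not the code from the download), the sketch below turns a raw address into the familiar `XX:XX:XX:XX:XX:XX` text form and compares it with the robot’s address. The `bt_dev_t` struct and the three helper functions are hypothetical names; the one assumption borrowed from BlueZ is that a 6-byte address is stored in reverse order, so byte 5 is printed first.

```c
#include <ctype.h>
#include <stdint.h>
#include <stdio.h>

/* Hypothetical stand-in for one entry of the BTDevs array. */
typedef struct {
    uint8_t addr[6];   /* assumed BlueZ byte order: addr[5] is printed first */
    char    name[32];
} bt_dev_t;

/* Format a 6-byte address as "XX:XX:XX:XX:XX:XX" (17 chars + NUL). */
static void bt_addr_to_str(const uint8_t addr[6], char out[18])
{
    snprintf(out, 18, "%02X:%02X:%02X:%02X:%02X:%02X",
             addr[5], addr[4], addr[3], addr[2], addr[1], addr[0]);
}

/* Case-insensitive comparison of two address strings; 1 if equal. */
static int bt_addr_equal(const char *a, const char *b)
{
    while (*a && *b) {
        if (toupper((unsigned char)*a) != toupper((unsigned char)*b))
            return 0;
        a++;
        b++;
    }
    return *a == '\0' && *b == '\0';
}

/* Return the index of the robot in devs[], or -1 if it was not found. */
static int find_robot(const bt_dev_t *devs, int count, const char *robot_addr)
{
    char buf[18];
    for (int i = 0; i < count; i++) {
        bt_addr_to_str(devs[i].addr, buf);
        if (bt_addr_equal(buf, robot_addr))
            return i;
    }
    return -1;
}
```

Matching on the address rather than the device name avoids picking the wrong device when several modules advertise the same default name.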

Bluetooth on Android using the Bluetooth SDK

To move forward with this project, we will skip the native BlueZ approach and use the Bluetooth SDK introduced with Android 2.0. So the code above is to be modified to do the discovery using the Android Bluetooth SDK. It will only work on Android 2.0 or newer.

So we delete the BTNative class, and instead of m_BT we will use m_BluetoothAdapter, defined as BluetoothAdapter m_BluetoothAdapter;. However, we’re still keeping the BTDevs structure to handle the discovered Bluetooth devices.

To do the Bluetooth discovery, we register for two broadcast events:

    // Register for broadcasts when a device is discovered
    IntentFilter filter = new IntentFilter(BluetoothDevice.ACTION_FOUND);
    this.registerReceiver(mReceiver, filter);

    // Register for broadcasts when discovery has finished
    filter = new IntentFilter(BluetoothAdapter.ACTION_DISCOVERY_FINISHED);
    this.registerReceiver(mReceiver, filter);

ACTION_FOUND is sent when a new device is discovered, and ACTION_DISCOVERY_FINISHED when the discovery process is complete.

Pressing the ROBOT entry in the list of discovered devices opens a socket for data exchange. The ROBOT sends sensor data, and the Android phone sends commands for moving the ROBOT around, turning on the lights, the laser pointer, etc.

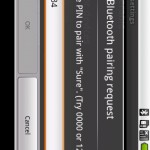

Please note that before attempting to connect a serial bluetooth socket from code to the ROBOT, you will need to manually go to Android’s Bluetooth Settings Panel, and pair the ROBOT Bluetooth device. For my BTModule, the pin code is 1234:


Again, this is an unforgivable limitation of the current Android Bluetooth SDK, and prohibits current applications from having full control over the device’s functionality.

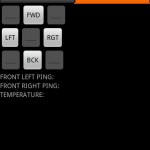

Once connected, we are taken automatically to the “Control” tab. In code, we need to obtain two stream handles to manage the connection: one for incoming data and one for outgoing data.

The “Control” tab offers an interface that can be used to move the robot. Since Perseus 3 is a differential-drive robot platform, each side must be driven forward or backward independently. To move forward, both sides are set to FORWARD. To move backward, both are set to BACKWARD. To turn around, one side is set to FORWARD and the other to BACKWARD, exactly as on a military tank.
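The differential-drive rule can be written down as a small lookup. This is only a sketch: the type and function names are made up for illustration, and which physical side counts as “left” on Perseus 3 is an assumption.

```c
/* Direction of one side of the robot. */
typedef enum { SIDE_STOP = 0, SIDE_FORWARD = 1, SIDE_BACKWARD = -1 } side_dir_t;

/* High-level movement requested by the user interface. */
typedef enum { MOVE_FORWARD, MOVE_BACKWARD, TURN_LEFT, TURN_RIGHT, MOVE_STOP } movement_t;

/* Resulting direction for each side. */
typedef struct { side_dir_t left, right; } drive_t;

/* Map a movement to per-side directions, tank style. */
static drive_t drive_for(movement_t m)
{
    drive_t d = { SIDE_STOP, SIDE_STOP };
    switch (m) {
    case MOVE_FORWARD:  d.left = SIDE_FORWARD;  d.right = SIDE_FORWARD;  break;
    case MOVE_BACKWARD: d.left = SIDE_BACKWARD; d.right = SIDE_BACKWARD; break;
    case TURN_LEFT:     d.left = SIDE_BACKWARD; d.right = SIDE_FORWARD;  break; /* assumed orientation */
    case TURN_RIGHT:    d.left = SIDE_FORWARD;  d.right = SIDE_BACKWARD; break; /* assumed orientation */
    case MOVE_STOP:     break;
    }
    return d;
}
```

Keeping this mapping in one function means the four interface buttons only need to name a movement, not individual motor pins.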

For easier use the interface provides 4 buttons that support all movement.

Download the Android Source code here:

android robot control (736KB)

The code currently only controls the robot. No sensor reading yet. I need to leave something for the next part of this article as well 🙂

The ROBOT Microcontroller software

It’s not enough to write code for the Android. I also had to write code for the ATMega8 controlling the ROBOT. This microcontroller has several functions:

– commanding the HBridges that control the powerful geared motors

– reading data from the sensors (PING Ultrasonic sensors, PIR sensors)

– commanding the robot LIGHTs

– commanding the laser pointer and other instruments

– interfacing the Bluetooth Radio Module via UART (TX/RX/TTL)

So I will try to keep things simple. I need to set up the 9600 baud rate for the link with the Bluetooth module, then listen for commands coming in over the Bluetooth serial socket. In this first version the commands are very simple:

the character ‘w’ moves forward, ‘d’ moves right, ‘a’ moves left, and ‘s’ moves backward.
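On the baud rate: on an AVR in normal asynchronous mode, 9600 baud comes down to a divisor derived from the CPU clock, baud = f_cpu / (16 × (UBRR + 1)). The sketch below works the number out off-target. The helper names are hypothetical, and the clock frequencies used in the example are assumptions, since the article does not state the clock of the board.

```c
#include <stdint.h>

/* UBRR value for asynchronous normal-speed mode (U2X = 0),
 * rounded to the nearest integer divisor. */
static uint16_t ubrr_for(uint32_t f_cpu_hz, uint32_t baud)
{
    return (uint16_t)((f_cpu_hz + 8UL * baud) / (16UL * baud) - 1UL);
}

/* Baud rate actually produced by a given UBRR value (truncated). */
static uint32_t baud_for(uint32_t f_cpu_hz, uint16_t ubrr)
{
    return f_cpu_hz / (16UL * ((uint32_t)ubrr + 1UL));
}
```

With an assumed 8 MHz clock, `ubrr_for(8000000, 9600)` gives 51, which yields roughly 9615 baud, close enough to 9600 for a reliable link. The firmware listing that follows leaves this calculation to the library call `uartSetBaudRate(9600)`.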

    #include <stdio.h>   // sprintf
    #include <string.h>  // strlen
    // The UART, timer, ADC, LED and PORT_ON/PORT_OFF headers are omitted in this excerpt.

    // Set in the UART receive handler, consumed in the main loop;
    // volatile so the main loop always re-reads them.
    volatile int g_nMove = 0, g_nTurn = 0;

    // Called for every byte received over the Bluetooth serial link
    void uartRxHandler(unsigned char c)
    {
        char xs[255] = {0};
        sprintf(xs, "[%c]\n\r", c);      // echo the received character
        uartSendBuffer(xs, strlen(xs));
        if (c == 'w') g_nMove = 1;       // forward
        if (c == 's') g_nMove = -1;      // backward
        if (c == 'd') g_nTurn = -1;      // right
        if (c == 'a') g_nTurn = 1;       // left
    }

    int main(void)
    {
        // general index
        int i = 0;
        DDRB |= 0x1E;                    // PB1..PB4 as outputs (H-bridge inputs)
        LEDInit();
        LEDSet(1);
        timerInit();                     // initialize the timer system
        a2dInit();                       // initialize analog to digital converter (ADC)
        a2dSetPrescaler(ADC_PRESCALE_DIV32);  // configure ADC scaling
        a2dSetReference(ADC_REFERENCE_AVCC);  // configure ADC reference voltage
        // uart
        uartInit();                      // initialize the UART (serial port)
        uartSetBaudRate(9600);           // set the baud rate of the UART for our debug/reporting output
        uartSetRxHandler(uartRxHandler);
        uartSendBuffer("Serial on.\n\r", 12);
        // main loop
        while (1)
        {
            delay_us(1000000);
            i++;
            if (i > 255) i = 0;
            LEDSet(i % 2);               // flash the board led
            int nPINGL = a2dConvert8bit(5);  // info sensor PING front-left >0 means obstacle detected
            int nPINGR = a2dConvert8bit(4);  // info sensor PING front-right >0 means obstacle detected
            //PORT_ON(PORTB, 1);
            //PORT_ON(PORTB, 3);
            if (g_nMove == 1)            // forward
            {
                PORT_ON(PORTB, 1);
                PORT_ON(PORTB, 3);
                delay_us(2600000);
                PORT_OFF(PORTB, 1);
                PORT_OFF(PORTB, 3);
                g_nMove = 0;
            }
            if (g_nMove == -1)           // backward
            {
                PORT_ON(PORTB, 2);
                PORT_ON(PORTB, 4);
                delay_us(2600000);
                PORT_OFF(PORTB, 2);
                PORT_OFF(PORTB, 4);
                g_nMove = 0;
            }
            if (g_nTurn == 1)            // left
            {
                PORT_ON(PORTB, 1);
                PORT_ON(PORTB, 4);
                delay_us(1500000);
                PORT_OFF(PORTB, 1);
                PORT_OFF(PORTB, 4);
                g_nTurn = 0;
            }
            if (g_nTurn == -1)           // right
            {
                PORT_ON(PORTB, 2);
                PORT_ON(PORTB, 3);
                delay_us(2000000);
                PORT_OFF(PORTB, 2);
                PORT_OFF(PORTB, 3);
                g_nTurn = 0;
            }
        }
        return 0;
    }
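The character-to-action mapping used by the receive handler can also be pulled out into a pure function, which makes it easy to check on a PC before flashing. This is a sketch only; `command_t` and `decode_command` are hypothetical names, and the values mirror the ones assigned to `g_nMove` and `g_nTurn` in the listing.

```c
/* Decoded command: move is 1 (forward) or -1 (backward),
 * turn is 1 (left) or -1 (right), 0 means "no change". */
typedef struct { int move; int turn; } command_t;

/* Decode one received byte. Returns 1 for a known command, 0 otherwise. */
static int decode_command(unsigned char c, command_t *out)
{
    out->move = 0;
    out->turn = 0;
    switch (c) {
    case 'w': out->move = 1;  return 1;   /* forward  */
    case 's': out->move = -1; return 1;   /* backward */
    case 'a': out->turn = 1;  return 1;   /* left     */
    case 'd': out->turn = -1; return 1;   /* right    */
    default:  return 0;                   /* unknown byte, ignored */
    }
}
```

Returning a flag for unknown bytes lets the caller ignore line noise or stray characters instead of acting on them.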

Download the complete source code here:

ATMega8 Android ROBOBrain-1

To learn how to compile this code and to program the microcontroller, see this tutorial. As you can see, I’ve explained everything you need to know to achieve this project in a few easy steps.

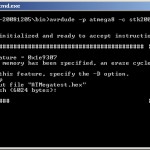

Here’s the Perseus-3 platform during code upload process (connected to my PC):


About part 2

This article needs to be continued. A better robot platform, better Android software, reading data from sensors (it’s actually easy, but I already wasted one full day for this part only), better ROBOT ATmega software, etc, and not to forget, more pictures and videos.

EDIT: Here are some variants created by my readers

Bryan, from Singapore, created a very nice Meccano robot that can be controlled with an Android phone.

Note: Part 2 is available here: Android controlled robot – Part 2

Hi, I’m trying to connect an Android device to a Wiimote using BlueZ. I can discover the Wiimote, but when I try to connect it doesn’t work and I don’t know why. How can I fix it? If you need more info, please write me an e-mail.

You want to implement the HID protocol to connect to the Wiimote? For that you will need L2CAP connections. What have you done so far?

I’m using the wiiuse C libraries with a JNI wrapper to Java. I do the find and I find the Wiimote, but when I invoke connect it doesn’t work. If you want to see the code, write me at davi.estivariz@gmail(dot)com. Thanks, and sorry for answering so late.

David

Sorry, the e-mail is david.estivariz@gmail.com. Please tell me whether I have to do any prior configuration or whether I’m not using the correct code.

Thank you

Hello David, I got your email. I’ll look over the code and get back to you. What Android device are you testing this on?

Well, I’m going to explain the whole problem here on the blog. I’m using a Huawei U8110 device for the project, because one of the requirements is that it must be a cheap device. This device runs Android 2.1 Eclair, which is compatible with the BlueZ Bluetooth stack. I’m using the L2CAP connections that BlueZ provides. When I’m discovering the Wiimote, I get these messages:

E/BluetoothEventLoop.cpp(125):event_filter:Received siganl org.bluez.Adapter.PropertyChanged from /org/bluez/7795/hci0

E/BluetoothEventLoop.cpp(125):event_filter:Received siganl org.bluez.Adapter.DeviceFound from /org/bluez/7795/hci0

E/BluetoothEventLoop.cpp(125):event_filter:Received siganl org.bluez.Adapter.PropertyChanged from /org/bluez/7795/hci0

E/BluetoothEventLoop.cpp(125):stopDiscoveryNative: D-Bus error In StopDiscovery: org.bluez.Error.Failed(Invalid discovery session)

I have used the wiiuse libraries compiled for Android and the wiiusej wrapper, and now I’m trying to use another C library. Any help would make me happy, thank you.

David

With the library used in the Rwiimote project, I run into the same problem when I make the connect call, and I get this message:

D-Bus error in GetProperties: org.freedesktop.DBus.Error.UnknownMethod (Method "GetProperties" with signature "" on interface "org.bluez.Device" doesn't exist)

Thank you

David

Hello David,

Could you try my code and see if that works for you? It is available here:

http://www.pocketmagic.net/wp-content/uploads/2010/10/android-bluetooth-discovery-native-code-jni.zip

Hi Radu, I can’t try it right now because I have left work and won’t get home until tonight. I will test it tomorrow morning. Thank you

David

Hi Radu, I have tried this code and I can discover the Wiimote, but I don’t know whether this code also connects to it or not.

David

Hey,

This is awesome. I have been trying to build a function like that for a few months, and until now I have only finished the GUI and the buttons. You are a genius!

Thanks,

Bryan

Hi Bryan, are you building a robot?

Yup. I want to build one but progress is very slow. Your Java programming skills must be pretty good.

Thank you, Bryan, I’m a software developer, programming is what I do. But the hard part is the hardware, since you need to build custom components, you might need a lot of tools.

I am a mechatronics guy, so hardware is not a problem for me, but I have problems with high-level programming. Thanks for your code!

Hi,

I have to connect an Android phone to Windows XP via Bluetooth. Please point me in a specific direction. When I press a button, the Windows Bluetooth side should receive a signal. In my project I have to use the Android phone as a joystick for a racing game. Please help me in this regard and point me in the right direction.

@Bryan, that’s great, if there’s anything I can help you with, ask.

@Kasuri, what is the purpose of this connection, what data do you want to exchange?

Hi, we are trying to send signals to a wheelchair to control it with our microcontroller. It is a school project, and I was wondering if we could use your code in our project. The project is due next week.

We were trying to make this work with an iPhone, but its limited Bluetooth connectivity made us change the platform to Android at the last minute.

Any help is greatly appreciated. Thank you so much.

Hello Sakshi,

Yes, but please indicate the source (this article).

If you need help on this, just ask.

Radu

Hi Radu Motisan,

Finally, I built one with your great help and inspiration.

http://sintec-hobby.com/

Thanks,

Bryan

Bryan that looks great! Congratulations on your project.

I would like to post some of your pictures and a link-back here, is that ok with you?

sure, Radu! Thanks!

How about connecting the robot via Android Wi-Fi?

@voiox: What about it?

Hi Radu Motisan,

First of all, hats off to your article.

Now my doubt,

I am working on Android 2.1.

Google says that Android has supported the A2DP profile since Android 1.5 (Cupcake), but the public APIs for the A2DP Bluetooth profile are available only from API level 11.

Does that mean that I cannot make my own app which can transfer streaming audio between two devices that support A2DP profile if I am using API level <11?

If that is true, can android ndk help me in this matter?

Thanks,

Pulkit

Hi all,

Can you explain how to connect Android Wi-Fi with a robot?

Thanks

Hi!

Could anybody help me in my project? I’m trying to connect to a bluetooth device using MAC address from my Samsung Galaxy S (SDK 2.1, rooted) using native code, but I didn’t manage to do it so far using this C code:

    jint Java_com_bluetooth_RSSI_connectbt(JNIEnv* env, jobject this, jstring mac)
    {
        int s, status;
        int dd;
        int i;
        struct sockaddr_rc addr = { 0 };
        struct hci_conn_info_req *cr;
        char * dest; // = "00:1E:E1:7D:54:B4";
        struct timeval timeout;

        dest = (*env)->GetStringUTFChars(env, mac, 0);
        __android_log_print(ANDROID_LOG_INFO, TAG, "rfcomm %s", dest);

        // allocate a socket
        s = socket(AF_BLUETOOTH, SOCK_STREAM, BTPROTO_RFCOMM);

        // set the connection parameters (who to connect to)
        addr.rc_family = AF_BLUETOOTH;
        addr.rc_channel = (uint8_t) 1;
        str2ba( dest, &addr.rc_bdaddr );

        // connect to server
        __android_log_print(ANDROID_LOG_INFO, TAG, " Trying to connect");
        //setsockopt(s, SOL_SOCKET, SO_SNDTIMEO, &timeout, sizeof(struct timeval));
        status = connect(s, (struct sockaddr *)&addr, sizeof(addr));
        if (status == 0){
            __android_log_print(ANDROID_LOG_INFO, TAG, " CONNECTED!!!");
            return s;
        }
        __android_log_print(ANDROID_LOG_INFO, TAG, " no connect");
        return -1;
    }

I get every time in the log:

04-17 11:08:11.524: INFO/native_service(7973): Trying to connect

04-17 11:08:11.524: INFO/native_service(7973): no connect

Does anyone have any idea about the possible problems? I’m a little desperate here, as I couldn’t move on with my project for 2 weeks now, and I have to finish it soon 🙁

Peter (goczanpeter@gmail.com)

Hello Peter, how are you?

The approach you’ve used is wrong. When initiating a Bluetooth connection, you need to make sure that:

1. the server (identified by the MAC address) is up and listening for an incoming connection

2. the client tries to open a connection on the protocol expected by the server (similar to TCP/IP)

3. the client tries to use a service exported by the server (similar to TCP/IP ports).

In my sample, android-control-robot-via-bluetooth1.zip, you can see the Connect function. I’m opening RFComm socket (as you tried), but I’m explicitly indicating the SPP_UUID service, because my server (the Bluetooth Chip on the robot), expects an incoming bluetooth serial connection.

So please check all these issues and try again.

Good luck!

Hi Radu, I’m fine, thank you, just a bit frustrated that I haven’t been able to solve this for quite a while, and I have to hand in my thesis in 10 days. And you? 🙂

Thanks for your fast answer, but I have a few questions about it.

You said: the server (identified by the MAC address) is up and listening for an incoming connection. Does this mean, that I have to implement some code on the target device as well? Isn’t it possible to write a code that works with (almost) any bluetooth devices?

I’ve checked your code’s connection part. Do I get it well, that you use SDK to connect, and native codes only in other functions?

Well, my project is about getting the connection’s link quality value, so I want to use “int hci_read_link_quality(int dd, uint16_t handle, uint8_t *link_quality, int to)” included in hci.c. Do you think it’s possible to connect to a device using the SDK, then get the parameters for this function, and then use it via JNI?

I tried to do it this way, but then I could not get the handle parameter (although I have a function that should do that).

Sorry for asking so much, this is my first Android project, and I have only 10 days to finish it, and you are the first one who is able to help me and takes the time to do it.

If you could take a look at my code (it is pretty short), please send me an e-mail.

Best

Peter

I’m doing well, thank you for asking – don’t worry about the project – it will come to a result eventually.

Yes, there must be a server to connect to. Yes, you need to write and run code on the server side. It is not possible to initiate a connection if the server is not listening. This is why your code fails.

After you get a successful connection, you might be able to get the RSSI, but only with JNI.

Yes, I used the Android SDK for the client, a very basic Bluetooth interface has been added in Android 2.0, and it supports the serial port profile – meaning you can open a bluetooth socket and then exchange data like with a serial port.

This protocol is exactly what I need to connect to my robot. My robot uses a microcontroller and a Bluetooth chip whose firmware already implements a Bluetooth server listening for an incoming Bluetooth connection with the serial port profile. So I can connect to it from my Android phone.

For the RSSI you will need JNI, but first make sure you implement the connection correctly (specifying the service profile used – eg. serial port) and that you have a server.

With JNI it is possible (on some devices) to also implement other profiles, such as HID (using L2CAP connections).

Let me know if you have other questions.

Good luck!

Radu

Hi!

This was very useful information, thank you VERY much.

When I tried to connect to the target device using the SDK after pairing the devices (and setting “automatically accept connections from this device” on the server side), the connection was successful without any code on the server side, and the status stayed “connected” until I cancelled the connection. Using this C code, however, I could not make the connection (because of the reasons you wrote, I think). Is it possible that, if I use the SDK and manage to establish a connection, I can then get the RSSI without code on the target device, or do I have to implement a listener on it as well in that case? (I tried that; after connecting, getting the RSSI failed, so I think it won’t work this way.)

Will the target device not listen automatically after a successful connection (when my phone appears as “active” in the target device’s connections list)?

Cheers

Peter

And by the way, do you think it’s possible to listen somehow with a computer running Ubuntu, connect to that from my Android phone using the SDK’s connect, and measure the RSSI between the phone and the computer’s Bluetooth adapter? (This way I wouldn’t need two phones to test the application.)

If I implement a listener on an other android phone, that phone shouldn’t have to be rooted, right?

Do you think this code should do the work?

http://code.google.com/p/android-bluetooth/source/browse/branches/two_dot_o/AndroidBluetoothLibrary/src/it/gerdavax/easybluetooth/ConnectionListener.java?r=67

or this:

http://www.devdaily.com/java/jwarehouse/android/core/java/android/bluetooth/BluetoothServerSocket.java.shtml

And really: Thanks for your help A LOT!

Peter

Hi!

I was able to connect to another Android device by running the same program on both devices and writing a listener interface into my code for the server side, but after that I got a little stuck.

For being able to get the rssi value I need to use

int hci_read_rssi(int dd, uint16_t handle, int8_t *rssi, int to), where I think “dd” is the number of the socket the connection lives on. I’ve got a code that gives me the handle parameter:

    jint Java_com_bluetooth_Lq_getinfobt(JNIEnv* env, jobject this, jstring mac, int dd)
    {
        struct sockaddr_rc addr = { 0 };
        struct hci_conn_info_req *cr;
        char * dest;
        bdaddr_t bdaddr;

        dest = (*env)->GetStringUTFChars(env, mac, 0);
        cr = malloc(sizeof(*cr) + sizeof(struct hci_conn_info));
        if (!cr) {
            __android_log_print(ANDROID_LOG_INFO, TAGE, " Can't allocate memory");
        }
        __android_log_print(ANDROID_LOG_INFO, TAG, " /Getinfo/ dd = %d", dd);
        str2ba(dest, &cr->bdaddr);
        cr->type = ACL_LINK;
        /* The middle of the next statement was lost when the comment was posted; only its two ends survive. */
        if (ioctl(dd, HCIGETCONNINFO, (unsigned long) cr) < [...] cr->conn_info->handle;
    }

but this needs the dd parameter as well, that I don’t know how to get after connecting the 2 devices.

I’ve got a method written for getting rssi as well:

    jint Java_com_bluetooth_Lq_rssibt(JNIEnv* env, jobject this, int handle, jstring mac, int dd)
    {
        int8_t rssi;
        int i;
        int dev_id;
        char * dest;
        bdaddr_t bdaddr;

        dest = (*env)->GetStringUTFChars(env, mac, 0);
        __android_log_print(ANDROID_LOG_INFO, TAG, " /Getrssi/ dd = %d", dd);
        if (hci_read_rssi(dd, htobs(handle), &rssi, 1) < 0) {
            __android_log_print(ANDROID_LOG_INFO, TAGE, " Read RSSI failed");
            return -100;
        }
        return rssi;
    }

So based on this, all I need to know is the "dd" parameter. Using that I shall get the handle parameter, and after that I hope I'll finally get the RSSI value.

For getting dd I also have a native function:

    jint Java_com_bluetooth_Lq_gethcisockbt(JNIEnv* env, jobject this, int old)
    {
        int dev_id, dd;

        hci_close_dev(old);
        dev_id = hci_get_route(NULL);
        dd = hci_open_dev(dev_id);
        __android_log_print(ANDROID_LOG_INFO, TAGE, " dd = %d | old = %d", dd, old);
        return dd;
    }

But I don't think this would return the socket number I need after building the connection.

Do you have any advice on how to get the dd parameter?

Do you think this should work this way?

Thanks in advance,

Peter

Hi! First… Congratulations!

I’m an electrical engineer and I did a project with an ATmega and a Bluetooth module, but the communication was done through a PC with Windows 7. My question is: in Windows I had to set the baud rate in the code to connect to the device at 9600 bps through the virtual serial port. Doesn’t the Android app need this? If it does, how did you do it?

Thanks,

Rogerio

@Peter, yes this might work, but I never tried it so can’t say for sure. I got the RSSI on a windows mobile device, after the connection has been established.

@Rogerio, thanks, indeed the windows api explicitly allows you to setup the baud rate, also the UART Bluetooth Chip is set for 9600bps (and I think it can go even higher with firmware modifications), regarding Android here’s what the SDK says: http://developer.android.com/reference/android/bluetooth/BluetoothDevice.html#createRfcommSocketToServiceRecord%28java.util.UUID%29

then see this: http://stackoverflow.com/questions/5576237/android-bluetooth-serial-rfcomm-spp-how-to-change-the-baud-rate

@Radu, good link suggestion.

I’m thinking of implementing an Android app for my project, but those are future plans.

Best regards,

Rogerio.

Reading all these comments got me interested in Android programming. @Radu, sir, can you suggest a simple language that I could learn quickly? (I don’t know C or C++.)

Please reply soon.

Hi kiranreddy, you can start learning Java, see: http://www.pocketmagic.net/?s=android

Hi!

Finally I managed to finish my project, and I just wanted to thank you the help and support you’ve provided:)

Peter

@Radu, sir, is Java supported for Android apps? Can you suggest another easy language for Android programming? Could you give me a beginner’s guide to programming Android in Perl? 🙂

Thank you. (n_n)

@Peter, that sounds great, congrats! How did you manage to overcome the previous obstacles?

@kiranreddy: for Android you’ll need Java

Hi Radu,

I am in the planning stages of a project: a hexapod robot with 18 Tower Pro SG5010 servos for the legs, a Pololu 24-channel servo controller, an Arduino Uno with an ATmega328 microcontroller, and a Bluetooth module. I plan to control the hexapod with an Android phone through Bluetooth.

Do you know if it will be possible to control it with the android phone, or would it be too complex as it will have to command 18 servos?

Also would any of your code be useful to me if I modify it?

Thanks in advance,

David

Mr. Radu, please help me! Can I control the robot over Wi-Fi?

If that works, do you have a tutorial for it?

Thank you in advance.

Hi,

I found out that I did not need a Client and a Server side of the software, nor an UUID, so using native codes I managed to write a program that is able to connect to any bluetooth device, but only if they are paired, only with Android 2.2 Froyo os and only with a bluetooth chip 2.0 or 2.1. The phone needs to be rooted too, and I know there are too many “only if”-s, but still, it works 🙂

Hi Peter, this sounds intriguing, to say the least. Can you share more details on your approach?

Hi, well, using the BlueZ stack and lots of help from a Russian developer, I could implement a function to get a free Bluetooth socket to connect with. Then, using this socket, I got some parameters with another function so that I could call hci_read_link_quality(), and after getting the desired value I had to disconnect from the device with a disconnect function similar to the BlueZ stack’s implementation. It is all written in C and built using the Android NDK. If you would like to see the code, send me a mail and I’ll send it to you 😉

Hello

I am new to android. i am working on a project in which i have to connect android’s BT to Bluetooth Module. I am able to discover the devices but i don’t know how to send and receive data. i mean i am unable to set server and client code…can you please help me out i shall be very thankful to you. my email is syeds89@hotmail.com & shan72ca@yahoo.com

Hi,

I have tried this code. The bluetooth device gets found, but cannot connect to the device! I get

: DEBUG/PerseusAndroid(2408): StartReadThread: disconnected

: DEBUG/PerseusAndroid(2408): java.io.IOException: socket closed

Why is that?

Thanks.

Hi I’m wondering if you could give me some advice regarding Bluetooth on Android.

I saw your post on stackoverflow and followed your profiles link to this page and saw your robot.

I want to do bluetooth communication and have my own UUID. I want to discover other BT devices and find their Service Records. Each device will be a host and have a listening serversocket. I want to discover other hosts and know if they are hosting the same service or if the device is not running the specific service. Do you think this is possible with the Android SDK version 8 or will I need to use the NDK you described in this post?

Alex, you can use the Android SDK, and actually you should use it to avoid compatibility issues, but you will be limited to the serial port profile (SPP) and the corresponding UUID.

So you should open listening sockets, and when a client connects, send a little data package to it, so it would know it is your custom service.

Hope this works for you. NDK approach gives better control, including SDP, but it will work only on a few devices.

Hello Radu,

thanks for the fast response. RFCOMM sockets are the way to go for me anyways since I am porting an application from J2ME which has the functionality to scan for devices and query if my services are provided. I will want to do communication between the two platforms so I will probably have to change something on the j2me platform side to conform to the changes necessary on Android.

I have one more question: do you know if it is possible to make it so the menu, that asks if it is ok to have the phone discoverable for 300 seconds, not show up?

I had this problem myself, unfortunately I don’t think it is possible to get the phone discoverable without that standard popup.

Hi Radu,

Where i can get C code for bluetooth – atmega example?

by

http://www.pocketmagic.net/wp-content/uploads/2010/11/ATMega8-Android-ROBOBrain-11.zip

Thanks Radu!

I downloaded it, compiled it, and ran it to see if I could use it to connect to a different device.

I changed the final static String ROBO_BTADDR = “00:1D:43:00:E4:78” to use the address of the other devices (I tried several) but each time when it gets to the m_btSck.connect() line it throws an IOException Service Discovery Failed.

Any idea why? There isn’t anything special about the UUID you supply it when you create the device in this line is there?

m_btSck = ROBOBTDev.createRfcommSocketToServiceRecord(SPP_UUID);

Note that I stepped through the code and the m_btSck looks good in Eclipse. That is to say it seems to be a valid socket.

Any idea at all what is going on? I just am trying to connect. Once I get that far, I’ll be OK but I cannot seem to connect to any Bluetooth devices at all and I have tried several now (Free2move dongle, my PC with a USB Bluetooth adapter, and my Garmin Nuvi).

Thanks!

Hi there

Thanks a lot for the code and the good documentation about it. It helped me a lot to continue the project I was working on.

I have used your code as the base code to connect Wii balance board to the Android and have written an app for Android which can connect to wii balance board and use it as a scale. This is part of a research project which I am involved in University of Saskatchewan. I plan to work on this a little bit more and when it is stable enough, publish it as a open-source freeware.

By now I have finished most of the implementation, but I have done no deployment. I wanted to ask your permission on this and see whether you are ok with this or not. It would be great if you get back to me (preferably by email) and let me know about it.

Cheers,

M.

Hello Mohammad, feel free to use and include my code in your freeware app, just provide a link to my name/this article in your application’s about file.

I want to do this project as my final-year project, but they are asking for some application of the robot, and they are asking what the reason is for using Android phones. Can you please help me by suggesting some application for it?


Hi need urgent help

I am making a similar kind of project to control home appliances through Bluetooth… please guide me on how to write the program that sends signals to the microcontroller telling it to turn the appliances on/off.

please help!!!

Salman, the article already contains everything you need to know, why don’t you read it carefully?

Here is the direct link: http://www.pocketmagic.net/?p=924

Hi

Is it possible to send AT commands from an Android application?

I want to turn the appliances on/off remotely; for that I want to send an SMS to a GSM module, which is possible via AT commands.

So I wanted to ask whether Android supports AT commands and, if yes, how to use them.

Need urgent help..

Hi, Is it possible to contract your services for a firm that is looking to create something similar to what you have just done? If so please email me your contact number.

Hi, i wanted to ask some questions please respond as soon as possible

1) Do we have to program the bluetooth module for accepting an incoming connections ?

2) Currently I do not have an Android phone, so how can I test the SDK Bluetooth Chat application on the Android emulator? Please help, I am stuck and want to complete my project.

1) No, the BT Module is preprogrammed and exposes the SPP profile.

2) The emulator doesn’t support physical BT Connections. I suggest you use your computer if it has bluetooth, or simply connect a USB BT Dongle, available for little money on Ebay. SPP is very easy to use.

Hope this helps,

Thx for the reply Radu

1) I don’t have an Android phone, so how can I check whether the Bluetooth application is working or not?

2) I used your tabs code in Eclipse; when I run it in the emulator it reports an error. Isn’t there any way to test Android applications on the emulator?

1) Write a program for your PC. Put a USB Bluetooth dongle in your PC.

2) Check the error and try to fix it. You can use the emulator to test your Android code, but not Bluetooth functionality, since the emulator cannot produce radio waves and communicate via Bluetooth.

@Radu

I managed to test the Bluetooth Android application on the emulator :)… Installing the Android emulator on a virtual machine allows us to test Bluetooth applications.

Hey, i was wondering if this could be used to control an android tablet with an android phone…emulator controller maybe???? i dont know much about coding but i have been looking everywhere for info and no one has apparently thought of this. thanks

@jordan, it can be done, but what purpose would it serve?

Hey, how do I get the data from the Bluetooth module into the Android application?

For example: my Bluetooth module is connected to a microcontroller that holds the status of the appliances.

So when I connect my application to the Bluetooth module, the application should automatically show the status of the appliances connected to the controller.

Salman, it is very easy. I didn’t implement that in my sample above – it only sends data from Android to uC, but to do what you want, you only need to call “uartSendBuffer” in the uC code to send data over the bluetooth serial connection.

Hey, if I connect my Android application to a laptop that has Bluetooth, how do I pass the data to the laptop? Is there any way to test by sending data from the application to the laptop?

I have no idea about this, please help.

How do I connect the Android application to a PC that has Bluetooth and check the data in HyperTerminal? Please guide me.

Hi,

I’m trying to use the JNI approach from your code to discover the BT-enabled devices and communicate with the selected one, but discovery fails. When I logged where it fails, I found that the function “hci_devid” returns -1, so I can’t get a valid ‘dev_id’. Since this is the starting point, I can’t go any further with my investigation.

I use a Galaxy S Android phone with BT v3.0, and my Android application needs to communicate with a sensor and receive and process data from it (the sensor has BT v1.1). Once I get this working, I need to look into using HCI, since I need to configure some BT parameters on the phone.

Can you help me make this project work? Just as a note, if I use the Android SDK it works, but not with the JNI approach 🙁

Thanks in advance.

hi Radu

I need your help. I want to buy a BT module; the problem is which one to buy. Here are some online shops I have easy access to.

Is there something I should consider when buying one of these BT modules? Please help.

I got mine from ebay, a simple UART BT Module

hey radu

If I want to send data from the uC to Android, do I have to add code to the Android code you posted? And if yes, what do I have to do to get the input data from the uC on Android?

@Salman , the complete scenario would be: uC connected to BT Chip via UART , and BT Chip connected to the Android Via SPP over BT. Now you can do the following:

– on the uC, use uartSendBuffer to send data to the UART; this will reach the BT chip and will be sent to the Android phone

– on the Android phone, simply read from the m_btSck socket

Actually, what I want is this: the uC sends a status, e.g. (ABC), to the BT module via UART. Now how do I show this status in my Android application?

You will need to train in Java and Android programming. It is easy, but better start with simpler projects.

I know Java programming. The thing is, can you tell me whether I can use m_btSck.getInputStream().read() to get the data coming from the BT module?

Hi Salman, you can either try converting your inputstream to new DataInputStream(InputStream) and do reading on that or

InputStream inputstream = m_btSck.getInputStream();

final int buffersize = 512;

byte[] buffer = new byte[buffersize];

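int bytes;

// read() returns the number of bytes read, or -1 at end of stream

while ((bytes = inputstream.read(buffer, 0, buffer.length)) != -1) {

// only buffer[0 .. bytes-1] is valid here

// do something with the bytes like appending them to a string or something

}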

check bytes to see if it was filled and if not, read more, otherwise use those bytes you got in buffer
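A note for readers following this exchange: below is a minimal sketch of the reading side, assuming the microcontroller ends each status message with a newline. That terminator is an assumption; the article's firmware does not define one. On a live Bluetooth socket `read()` blocks until data arrives, and the end of the stream is not reached while the connection stays open, so a delimiter lets the app use each message as soon as it is complete. A `ByteArrayInputStream` stands in for `m_btSck.getInputStream()` so the example runs on a plain JVM; `BtReadSketch` and `readMessage` are illustrative names, not part of the article's code.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.nio.charset.StandardCharsets;

public class BtReadSketch {
    // Reads bytes until the terminator or end of stream is seen and returns
    // the message without the terminator. The terminator is an assumed convention.
    public static String readMessage(InputStream in, int terminator) throws IOException {
        ByteArrayOutputStream message = new ByteArrayOutputStream();
        int b;
        while ((b = in.read()) != -1 && b != terminator) {
            message.write(b);
        }
        return new String(message.toByteArray(), StandardCharsets.US_ASCII);
    }

    public static void main(String[] args) throws IOException {
        // Stand-in for m_btSck.getInputStream(): two newline-terminated messages.
        InputStream in = new ByteArrayInputStream(
                "ABC\nXYZ\n".getBytes(StandardCharsets.US_ASCII));
        System.out.println(readMessage(in, '\n')); // prints ABC
        System.out.println(readMessage(in, '\n')); // prints XYZ
    }
}
```

Reading one byte at a time keeps the sketch short; on Android this loop would normally run on a background thread, since `read()` blocks.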

Thanks Alex, I did it this way but haven’t tested it yet. Am I on the right track?

ByteArrayOutputStream buffer1 = new ByteArrayOutputStream();

InputStream inputstream = m_btSck.getInputStream();

final int buffersize = 512;

byte[] buffer = new byte[buffersize];

int bytes;

// read() returns -1 at end of stream; check it before writing to buffer1

while ((bytes = inputstream.read(buffer, 0, buffer.length)) != -1)

{

buffer1.write(buffer, 0, bytes);

}

String str1 = new String(buffer1.toByteArray());

Toast.makeText(getBaseContext(), str1, Toast.LENGTH_LONG).show();

Thanks for the code, it worked fine on Android 2.2. But after a Samsung firmware upgrade (Android 2.3.6) it is not working.

Any suggestions? (The program runs on the phone but doesn’t discover the MCU.)

Hi Radu

In your Android code you have m_btSck.getOutputStream().write('w'); and it sends w to the controller. But I want to send the string R1N, so how can that be done?

Right now I have done it this way, but it’s not working:

byte[] byteArray = new byte[] {82,49,78};

m_btSck.getOutputStream().write(byteArray);

String value = new String(byteArray);

and the output of value is R1N

but this technique doesn’t seem to work for me. Any help?

@Salman, Hi! , try

String szCmd = "R1N";

byte[] lpCmd = szCmd.getBytes();

m_btSck.getOutputStream().write(lpCmd);

What does R1N mean?
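A self-contained way to check what actually leaves the phone, as a rough sketch: `getBytes()` without arguments uses the platform default charset, so naming ASCII explicitly makes the bytes predictable. Here a `ByteArrayOutputStream` stands in for `m_btSck.getOutputStream()`, and `BtWriteSketch` and `sendCommand` are illustrative names, not part of the article's code.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;
import java.util.Arrays;

public class BtWriteSketch {
    // Encodes the command as ASCII and writes it in one call.
    public static void sendCommand(OutputStream out, String command) throws IOException {
        out.write(command.getBytes(StandardCharsets.US_ASCII));
        out.flush();
    }

    public static void main(String[] args) throws IOException {
        // Stand-in for m_btSck.getOutputStream().
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        sendCommand(out, "R1N");
        System.out.println(Arrays.toString(out.toByteArray())); // prints [82, 49, 78]
    }
}
```

If the bytes written are 82, 49, 78 and the controller still receives something else, the cause is probably further down the link (for example the serial settings on the receiving side), which this sketch cannot show.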

It’s the keyword that I have to send to my AT89C52 controller.

I tried this as well, but it sends something like @Babczs to the controller…

Any other idea?

hi radu need your help

I have prepared my Android application and it is sending data to HyperTerminal.

I have an AT89S52 controller and I want to program it in BASCOM. I didn’t find any information about that. Can you help me with how to program the uC for Bluetooth in BASCOM? It can be in C as well. Please, it’s urgent.

hi Radu:)

I have a question about my project. I want to exchange SMS between two emulators; I implemented SMS but was not able to establish a separate connection to the other emulator. Can you send me code for this? I really need your help, please. Jan 28, 2012 is my final demo. Please send the code to my mail id

“manju.shirageri@gmail.com”

thanku 🙂

Hello Radu!! I am a big fan of yours!

We are having a mini project in our college and our idea is to control a car via Android using Bluetooth..

I am an amateur at programming the app… can you please give me suggestions as to how I should proceed?

I’ll be really thankful if you could help by just guiding me!

Thanks 🙂

hi manjunath, what is the current status?

Hey Guarav. You can start with this article, I posted all the source code needed here. It allows you to control the robot like you’ve seen in the video

Hi Radu ,

I am new to Android and I am trying to develop an Android application to transfer data from an Android phone to a PC or another phone. I am able to scan for devices but cannot send data. Can you please provide me the code for this?

Its my id-sandeshk779@gmail.com

please help me….

Thanks in advance…….

Hi all, this works for me.

Main problems:

Check your Android version:

—————————

Install the Android application with Eclipse.

Go to Project Properties and assign Android 2.2.

Clear all errors, such as the @Override annotations in your code.

Check your Bluetooth MAC address.

Put it in ROBO_BTADDR in PerseusAndroid.java.

After clearing all errors, export the application as an Android application and give a saving path.

Detecting Bluetooth devices:

—————————-

After installing this application on the phone:

Check the Bluetooth devices.

Check that the Bluetooth address matches ROBO_BTADDR (00:00:AA:—) in PerseusAndroid.java.

If both match, the device shows up.

Finally configure your robot; it works like a charm.

I need to connect multiple detection circuits to a microcontroller and display a message or some indication on my Android over Wi-Fi. Can you explain briefly what I need to do?

Hi, I tried your Android code in Eclipse Helios, but I get many errors. What program do you use to compile your Android program?

I want to control this robot with my Nokia N8. How can I do it? Can you explain it to me with the code? irfanmeerza007gmail.com

Hi Radu,

I make a robotic device that is controlled through Bluetooth. I wrote an Android app for this purpose (http://www.thegadgetworks.com/TL-PlusDownload.html) and developed and tested it on the Nexus One. But I came to discover that there are some other phones that will not find the robot, such as the Droid Incredible and Samsung Galaxy S. The problem seems to be in discovery, as these phones apparently skip any device with a major device class of 0 (MISC). The BT device in the robot is a simple serial transceiver using SPP. On these phones, the robot does not even appear in the pairing list when attempting to pair using the Bluetooth Settings dialog. What do you think is the best solution to this problem? Obviously the app cannot include hard-coded MAC addresses.

Hi Don, nice project you’re working on.

I’d like to help, but I have not a clue on a possible solution. I will think on it, and let you know if I get any ideas

hi radu

I like your project. I am going to do my final project using Android to control a robot with an ATMega 16. My problem is that I don’t know how to start this project.

Maybe you can give me some advice and tell me what I need for this project.

I have never learned about Android before, and I don’t know how to create an Android program.

I am now starting to learn all about Android.

I’ll be grateful for your help.

thanks

Hello faadhil ,

Best thing to do is to start with the code I’ve posted. I do have easy tutorials on how to start with Android platform, see the rest of my blog.

Ok thanks radu, i’ll try

hi Radu….i have a question..

Is there an algorithm or method used in the Android application? If so, which algorithm or method is it? Fuzzy logic, for example, or something else?

thanks before..

Hi Radu,

many thanks for this great tutorial. I’m having some troubles launching the jni native sample on my galaxy s2 (android 2.3.5 not rooted). Is it supposed to work?

I have simply imported your project inside eclipse and launched it, do I have to do something (for example recompile the c part)?

Thanks in advance

@Afta, there is no such algorithm yet.

@Franco, no need to open the JNI folder. Simply import the Java project in Eclipse. No need to recompile the JNI/C part either.

Hi,

I am doing a project on controlling home appliances using Android phones via Bluetooth. Do I need a specific phone for this? (Somebody said that for Bluetooth communication we need Android phones that have a serial interface facility.)

Awaiting your reply.

A regular Android phone with Bluetooth will do. What you need to do is:

Android—-(over BT)—> BT Module —(via UART)—> Microcontroller —–> Relays and other home appliances.
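To make the first hop of that chain concrete, here is a rough sketch of how the Android side might build the text commands it sends down the link. The command format is purely hypothetical: it is loosely modeled on the “R1N” string mentioned earlier in this thread, whose real meaning is not given there. `RelayCommandSketch` and `buildCommand` are illustrative names, and the firmware on the microcontroller would have to parse whatever format is actually chosen.

```java
public class RelayCommandSketch {
    // Hypothetical format: 'R', a relay number 1..9, then 'N' (on) or 'F' (off).
    public static String buildCommand(int relay, boolean on) {
        if (relay < 1 || relay > 9) {
            throw new IllegalArgumentException("relay must be 1..9");
        }
        return "R" + relay + (on ? "N" : "F");
    }

    public static void main(String[] args) {
        System.out.println(buildCommand(1, true));  // prints R1N
        System.out.println(buildCommand(2, false)); // prints R2F
    }
}
```

The resulting string would then be written to the Bluetooth socket's output stream as bytes; a fixed-length command like this keeps parsing on a small microcontroller simple.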

Hi radu,

Thank you very much for your reply. You say that all Android phones will work with the Bluetooth module. Am I correct?

Hi radu,

I have finished all the parts except the communication between Android and the Bluetooth module. Which Android phone will be easiest to communicate with? Can you suggest some phones?

thanks in advance..

very happy to see ur reply.

Hi Radu, thank you very much for your reply .

Could you please give me some help in getting the JNI sample working?

For example, does it require a specific version of Android, a specific version of BlueZ, and so on?

What is the configuration of the device you used for testing? Thanks in advance.

@Gowtham and @franco

If you are using JNI, you can use the Nexus One, the original G1, the Motorola Xoom, etc.

But it would be better to use “Bluetooth on Android using the Bluetooth SDK”, that is, without JNI, since the JNI approach is unfortunately not supported by all devices. The source code for that is also available in my article, direct link here: http://www.pocketmagic.net/wp-content/uploads/2010/11/android-control-robot-via-bluetooth1.zip

Compile it in Eclipse directly.

This version, without JNI, will work on almost all the Android devices with OS 2.0 or greater.

Hi radu,

We will check and then come back to you.

Thanks for your reply.

hi radu,

Is serial Bluetooth supported on all Android phones?

@Gowtham, yes, serial Bluetooth (SPP) works on all Android devices starting from OS 2.0.

how about via wifi? thanks

wifi is also possible.

Could you please give me some hints, clues, or even a simple sample program to do it? It would be a massive step in my thesis. I’m sorry if I’m asking too much, but I’m really desperate about this, and my thesis deadline is close.

Hope u’r well..regards, Lucky Silva

Hi..!!

Can you help me make a light control using Android Bluetooth connected to other Bluetooth devices? Please share a tutorial and the code with me by email: joniq.onex@gmail.com.

expect the response and thanks ………!

hi,

Nice Tutorial!! 🙂

I’m a newbie in android programming.. Can anyone tell me how to connect to scanned devices via android Bluetooth?

I’ve tried the developer.android.com. Please help..

Thanks in advance

Can you also help me make a light/LED control using Android Bluetooth connected to other Bluetooth devices? Please share a tutorial and the code with me by email: joniq.onex@gmail.com.

expect the response and thanks ………!

Hi, I’m using this code to move my multipurpose Android robot and it works perfectly. Can anyone help me with reading the sensors? I have created a third tab that should display the sensor value, but so far I still cannot get the data. Hopefully you can help me solve this problem. I can send you my source code to check. You can email me at: affandi37@gmail.com

Thank you very much..

Appreciate this post. Let me try it out.

Hello friend, is this project possible with Wi-Fi? If yes, then how?

Not only possible, but easy!

good one is run avr16 with RN42 bluetoth module

Radu, it looks like your program is just what I need to control my own Arduino Bluetooth project to let my boy run his trains. I could program when Fortran was cool and Microsoft only did DOS, and hardware is not a problem for me. However, I can’t figure out how to install your program on my wife’s smartphone. Everyone else seems to have no problem, but I seem to be missing the obvious. It appears that the download file must be compiled on a PC and then transferred to the phone… but I’m guessing. Can you help me understand more basically how to download and install the program?

Hi Jack,

Technology is moving fast, isn’t it?

To install the android software, please download the Control software. Direct link is: http://www.pocketmagic.net/wp-content/uploads/2010/11/android-control-robot-via-bluetooth1.zip

From this archive, extract PerseusAndroid\bin\PerseusAndroid.apk

Take the apk and put it on your phone’s SD card. Then use a file explorer app to browse to it; with a tap you should be able to install it.

Thank you. This was a big help. I found the file. Funny that you mention the pace of change…since my wife traded her Samsung phone in today on an iPhone…so I am stuck looking for a friend now that has an Android driven phone to try this on. I was so close… By the way, I like what you did with the robot. Keep tinkering. The kids will love it.

THanks RADU MOTISAN.this is quite good…

I want to make a Bluetooth receiver that opens a switch.

When the user sends a file (using any cell phone, without an app), it opens the switch.

I want to use it in a door lock (so it should ask the user to enter the correct code to pair with it).

I need the microprocessor code, and to know which type of processor to use for it.

glad this helps.

I read all your comments but can’t find a solution to my problem.

After connecting the Bluetooth module to the microprocessor, the next step is to write code to flash memory.

I want that code.

When the user connects to it with any cell phone (either Android or classic), it asks for pairing; after successful pairing it automatically sends a command to the relay to open the switch. There should be no need for coding or for software installed on the phone.

Thanks for giving your precious time.

Hello Sir, can you please provide me with a program code for interfacing ATmega328 with bluegiga wt32.

Only you can help me Sir.

Thanks alot.

hello sir,

Sir, I am doing a project in which I have to send ECG signals from a microcontroller to an Android phone via a Bluetooth module.

The values are sent to the phone, which compares them with pre-stored values and, in case there is an abnormality, sends a message with the exact location of the person to pre-stored numbers, e.g. the doctor’s or a close relative’s.

But I don’t know Android programming or how exactly to go about it.

Can you help me out, sir?

Thanks

I am waiting for your reply. I think you are not interested in my work.

Sir, I am a first-semester electrical engineering student. I will be thankful if you help me.

@techspark: this is not a trivial application. You should not take on tasks that exceed your knowledge unless you are prepared for a new learning effort. There is no easy way around this. Good luck

@umair: I don’t understand what you are trying to do

@affandi: do you still need help? What is the status of your project?

Connect a Bluetooth module and an electric lock (with a relay) to a microprocessor, to unlock this electric lock over Bluetooth. The user should be able to use any cell phone (iOS, Android or classic).

When the user searches for Bluetooth devices on the phone, this project should be visible, and when the user connects to it with the pair code, it should send a command to the microprocessor to unlock the lock. There should not be any software to install on the phone: simply go to Bluetooth and search for it. (If necessary, the user sends a file, any picture or song, which will surely be rejected but will activate it, to give the command to the microprocessor.)

I need the code that will run on the microprocessor to make this work.

can you help me

thanks

I am about to build a robot for my design project, and I had been doing a lot of research until I found this. I think I can get a lot from it. I hope you’ll keep in touch, as I’m sure I’ll have lots of questions. Thank you:)

Hello sir, where can I get the electronic devices and components installed in that car? Are they cheap?

@umair: in case you have specific programming questions I can help you, but I don’t have the time to do the work for you.

@Marycris: sure, feel free to ask. Maybe I can help.

@eddie: you’ll need to start with an atmega8 microcontroller. I would say it has a good price.

Sir, can the car be controlled from a laptop? I’m not good enough at this stuff, but I want to make it for my final project. I’m out of ideas. Help me, sir.

@eddie: for your final project I suggest you do something you are good at. It doesn’t make much sense copying stuff if you don’t understand it.

I’ve been trying to install your program on a Samsung Galaxy S3, an HTC Evo, and a Nexus 7 tablet, but every time I install it I get an “Unfortunately, PerseusAndroid has stopped.” message. The link I’m using is http://www.pocketmagic.net/wp-content/uploads/2010/11/android-control-robot-via-bluetooth1.zip . I’ve tried taking the apk file out of bin, and running the code through AIDE, with the same result. Fiddling around with the code, the error seems to happen on this line: tabHost.addTab(ts); in the createMainTABHost() method in PerseusAndroid.java. I’m not sure if maybe I have an older version of the code?

@luca: android has changed since I wrote that code. You need to make this change: TabHost tabHost = new TabHost(this, null);

instead of TabHost tabHost = new TabHost(this);

That did the trick! Thank you for the quick reply.

Glad to hear it works. what are you building?

This is part of an old robot similar to yours that already used to work. My professor wants me to get it working again so that I can learn a bit more about communicating between Android and Arduino.

Sounds good. Would love to see yours. Drop me a few pics if you have the time.

BTW, check out this project as well : http://www.pocketmagic.net/2012/12/diyhomemade-portable-radiation-dosimeter/ (it shows a connection from a uC to an Android device as well)

Thanks for all the help!

Sir, please help me. I also want to make a Bluetooth-controlled car, controlled from my Samsung Galaxy Y phone running Android 2.3.

What kits or instruments are required? Please help me step by step.

I need your help. I powered up an HC-05 Bluetooth module, but when I search for its MAC address I can’t find it! Should I activate it or make it discoverable, or what? I don’t understand the problem.

@dhia: When you power on your HC05 it should be discoverable by default. Make sure you’ve connected it correctly, including the 10K resistor.

Hello!

I am trying to use your application on Android 2.3.3 and it gives me an error when I try to search for devices: “Descovery bluetooth devices : res -1”. Also, could you please tell me how I might use hci_read_rssi? I don’t understand the parameters it takes.

Thanks!

Hi Victor,

I’ll try to send you the Android software used here: http://www.pocketmagic.net/2013/02/android-controlled-rower-robot-part-2/

That is, once I finish it.

What are you trying to do?

I’d like to build a triangulation application using the RSSI of several phones, and with the SDK I can only read it while discovering them… which means once every 12 seconds, and anyway, even if I can stop the discovery, the phones respond very slowly… even after 6 seconds, which is not useful.

I had also read that the BlueZ library is hidden in the NDK… I saw that you took those files from the Google sources. In any case, I didn’t do programming in high school, and at university I spent 90% of the time on Java, so C and C++ are not very familiar to me, which is why I don’t understand how to call hci_read_rssi();

I should mention that the application is for my bachelor’s thesis.

As for the error on the phone with 2.3.3, how could I fix it?

Thanks in advance!

Can you help me with that error too? And maybe tell me how to call the RSSI method?

Thanks a lot!

will this project still work if you used a PIC with UART interface instead of a ATMega?

Yes, but you’ll need the software for the pic. See this one too; http://www.pocketmagic.net/2013/02/android-controlled-robot-part-2/

Please help:

I want to make something like this program, but using Wi-Fi instead of Bluetooth.

I will present my graduation project on 10/06/2013.

Please help.

Hello sir, if we design the same thing using an ATmega16, what will the program code for the ATmega16 MCU be?

@Kumar: The same, but compiled for the mega16.

Hi,

How can I adapt this code for remote control between two Android devices? I want to make an Android-to-Android connection over Bluetooth and remotely control one device from the other. Kindly rework your code and post it soon.

Hello Radu,

Can you please make a tutorial explaining the UART of the ATmega8? I couldn’t find much information about it elsewhere. I want to learn what UART is, how it works on the ATmega8, how to code for it, and everything else you think a beginner should know.

Thanks

Shrinath

hello.,

I’m using my Bluetooth headset, but I’m having trouble with the Rx and Tx pins. My Bluetooth module has six pins: SPK+, SPK-, B+, B-, 5V and GND. How do I find those particular pins?

How to import the android source code on android studio linux version ?

Can you please post the link to part 2 of this article? The current link keeps redirecting to this same part 1. Thanks in advance!