OpenMAX, sometimes abbreviated as “OMX”, is a royalty-free, cross-platform set of routines and interfaces for multimedia processing. Intended mostly for embedded devices, it was designed with efficiency in mind, so that large amounts of multimedia data (video and audio codecs, graphics and so on) can be processed in an optimised and controlled fashion.

OpenMAX provides three layers of interfaces: the application layer (AL), the integration layer (IL) and the development layer (DL). OpenMAX IL is the interface between a media framework, such as StageFright or the MediaCodec API on Android, and the underlying codec components. More on this here.

Introduced with Android API level 16 (Android 4.1, Jelly Bean), the MediaCodec API gives access to the low-level media codecs, i.e. the encoder/decoder components, that ship with the Android OS. This is convenient for at least three reasons: (1) we get access to highly optimised, hardware-accelerated codec routines; (2) we avoid licensing complications (e.g. AAC requires a patent license for all manufacturers or developers of AAC codecs, fees avoidable by using the built-in AAC codecs via the MediaCodec class); and (3) we no longer have to bundle our own codec implementations, which are traditional computational hotspots: instead of integrating codecs into your app, simply use the built-in, standardised versions.

Android built-in codecs

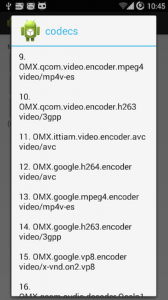

What types and how many codecs are we talking about? The short answer is “plenty” for most Android devices currently on the market.

This short piece of code iterates through the built-in codecs. I wrote a simple app to list the codecs installed on my phone. The image shows part of the list.

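    // Uses android.media.MediaCodecList, android.media.MediaCodecInfo, android.util.Log, java.util.Arrays
    int numCodecs = MediaCodecList.getCodecCount();
    for (int i = 0; i < numCodecs; i++) {
        MediaCodecInfo codecInfo = MediaCodecList.getCodecInfoAt(i);
        // MIME types this codec handles, e.g. "audio/mp4a-latm" for AAC
        String[] types = codecInfo.getSupportedTypes();
        Log.d("Codecs", codecInfo.getName() + " -> " + Arrays.toString(types));
    }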

Here's the output generated by a few devices I've tested this on:

Decoding with MediaCodec

Given the large variety of built-in codecs, the great thing about the new API is that it lets you feed an input buffer and get the results in an output buffer in just a few steps. This gives you fast encoding or decoding, depending on your needs. It is hard to get better performance than this on a device, since OMX does some of the work on dedicated hardware.

For the purpose of illustrating the concept and to give some sample code to get you started, let's say we need to build a media player for AAC and MP3 audio files, but we want to use the free and fast embedded decoders. The code presented here will work with any format supported by your mobile device. On the one I currently use, I was able to play Vorbis and WMA hassle-free, out of the box. As presented above, we can do all that using the MediaCodec API.

The starting point is the SDK page discussing MediaCodec. Besides this, there are several classes we need to interact with. MediaExtractor is used to read bytes from our encoded data (be it online streams, local files or embedded resources). MediaFormat describes the type of the encoded content and all the details related to it. Remember, the purpose of this article is to demonstrate building an audio player, but the API provided with Android API level 16 (or newer) can also be used for making a video player, by following mostly the same steps and adding a surface view to show the frames. Finally, having the audio decoded to PCM, we'll want to use the AudioTrack API to get the actual sound coming out of the speakers.
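
To get a feel for how much data "decoded to PCM" means, here is a small Android-free sketch. It assumes 16-bit samples, which the article does not state, and the class and method names (PcmMath, bytesPerSecond, bytesToMillis) are made up for illustration.

```java
/** Back-of-the-envelope helpers for 16-bit PCM; not part of any Android API. */
final class PcmMath {
    // Assumption: 16-bit PCM, i.e. 2 bytes per sample per channel.
    private static final int BYTES_PER_SAMPLE = 2;

    /** Bytes of PCM data needed for one second of audio. */
    static long bytesPerSecond(int sampleRate, int channels) {
        return (long) sampleRate * channels * BYTES_PER_SAMPLE;
    }

    /** Playback time, in milliseconds, covered by the given number of PCM bytes. */
    static long bytesToMillis(long pcmBytes, int sampleRate, int channels) {
        return pcmBytes * 1000L / bytesPerSecond(sampleRate, channels);
    }
}
```

For stereo audio at 44100 Hz this works out to 176400 bytes per second, which is the rate at which the decoder has to keep the audio output supplied.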

The architecture of an Audio Player

There are several ways to implement an audio player, but ultimately we'll want a player class that accepts the audio data source, offers a few commands like start, stop and pause, and reports events via an interface or a handler to inform us of the progress. The playback can be done synchronously on the same thread that called the player object, or on a separate thread. Usually the latter is the way to go, but this depends on your particular application.
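
The shape described above (a player object, a few commands, events, playback on its own thread) can be sketched in plain Java. This only illustrates the threading and command handling and is not the OpenMXPlayer implementation: SketchPlayer, SketchEvents and the chunk counter are made-up names, and no audio is decoded.

```java
import java.util.concurrent.atomic.AtomicInteger;

/** Hypothetical, trimmed-down listener; not the library's real interface. */
interface SketchEvents {
    void onPlay();
    void onStop();
}

/** Illustrative skeleton only: "playing" a chunk just increments a counter. */
final class SketchPlayer {
    private final SketchEvents events;
    private final int totalChunks;          // stands in for the length of the audio
    private final AtomicInteger played = new AtomicInteger();
    private volatile boolean stopRequested;
    private boolean paused;                 // guarded by the lock on 'this'
    private Thread worker;                  // guarded by the lock on 'this'

    SketchPlayer(SketchEvents events, int totalChunks) {
        this.events = events;
        this.totalChunks = totalChunks;
    }

    /** Starts playback on a new thread, or resumes it if it is paused. */
    synchronized void play() {
        paused = false;
        if (worker != null && worker.isAlive()) {
            notifyAll();                    // wake the paused worker
            return;
        }
        stopRequested = false;
        played.set(0);
        worker = new Thread(this::playbackLoop, "sketch-player");
        worker.start();
    }

    synchronized void pause() {
        paused = true;
    }

    synchronized void stop() {
        stopRequested = true;
        paused = false;
        notifyAll();                        // release the worker if it is parked
    }

    /** Blocks the caller until the playback thread has finished. */
    void awaitEnd() {
        Thread t;
        synchronized (this) { t = worker; }
        if (t == null) return;
        try {
            t.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }

    int chunksPlayed() { return played.get(); }

    private void playbackLoop() {
        events.onPlay();
        try {
            for (int i = 0; i < totalChunks && !stopRequested; i++) {
                synchronized (this) {
                    while (paused && !stopRequested) {
                        wait();             // parked until play() or stop()
                    }
                }
                if (stopRequested) break;
                played.incrementAndGet();   // a real player would decode and output audio here
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        } finally {
            events.onStop();                // always reported, even after stop()
        }
    }
}
```

The caller's thread only flips flags and returns immediately, while the loop runs on the worker thread. In an Android app that keeps the UI thread free during playback.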

For convenience I've designed the software as an Android Library project, that you can easily include in your own work. The library is named OpenMXPlayer. To get closer to the actual details of my implementation, here is a functional diagram:

Thanks to the interface exposed by MediaExtractor, we can feed a large variety of content to the player, be it online podcasts, live streams or local files. Even embedded resources (in res/raw or in assets) will work just fine. What we have here is a fast, optimised, highly versatile player that can handle popular audio formats such as MP3, WMA, Vorbis, AAC and more.

The client can hook up an interface, and get notifications:

    public interface PlayerEvents {
        public void onStart(String mime, int sampleRate, int channels, long duration);
        public void onPlay();
        public void onPlayUpdate(int percent, long currentms, long totalms);
        public void onStop();
        public void onError();
    }

You can play advanced audio content with just a few lines of code. It gets as simple as:

    OpenMXPlayer player = new OpenMXPlayer();
    player.setDataSource("/mnt/sdcard/Metallica - Nothing else matters.mp3");
    player.play();

Setting the listener is optional, but you'll want one in order to be notified of progress and events. Instead of the local MP3 you can just as easily pass the URL of a radio station or any other online audio resource.
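
As an illustration of what such a listener might do, here is a small sketch that turns the callbacks into a status string for display. The PlayerEvents interface is repeated from above so that the block compiles on its own. StatusListener and its clock helper are hypothetical names, and the way a listener gets registered with the player is not shown here; the demo activity is the place to look for that.

```java
// Repeated from the article so that the sketch is self-contained.
interface PlayerEvents {
    void onStart(String mime, int sampleRate, int channels, long duration);
    void onPlay();
    void onPlayUpdate(int percent, long currentms, long totalms);
    void onStop();
    void onError();
}

/** Hypothetical listener that keeps the latest player status as text. */
final class StatusListener implements PlayerEvents {
    String status = "idle";

    /** Formats milliseconds as m:ss. */
    static String clock(long ms) {
        long totalSeconds = ms / 1000;
        long seconds = totalSeconds % 60;
        return (totalSeconds / 60) + ":" + (seconds < 10 ? "0" : "") + seconds;
    }

    @Override
    public void onStart(String mime, int sampleRate, int channels, long duration) {
        // The duration is ignored here; only the stream format is shown.
        status = mime + ", " + sampleRate + " Hz, " + channels + " ch";
    }

    @Override
    public void onPlay() {
        status = "playing";
    }

    @Override
    public void onPlayUpdate(int percent, long currentms, long totalms) {
        status = percent + "% (" + clock(currentms) + " / " + clock(totalms) + ")";
    }

    @Override
    public void onStop() {
        status = "stopped";
    }

    @Override
    public void onError() {
        status = "error";
    }
}
```

In a real app the callbacks would most likely arrive from the playback thread, so any UI update would have to be posted to the main thread; that part is left out of the sketch.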

A video demo

To illustrate the way the player works, I've recorded a short video demo with the OpenMXPlayer in action. The test activity features three buttons that load local embedded resources (an MP3 file, an AAC file and a WMA file placed in the project's resources folder), an edit text to define a custom audio resource, and the Play, Pause and Stop buttons that we find on any player:

Getting the code

This software library is available as open source, licensed under the LGPL. To understand what you can and can't do with this code, have a look here. The code itself is available here, and on GitHub. The code comes with a demo activity that uses most of the logic provided in the library. Use it as a starting point. For any questions, feel free to contact me by mail or through the comments section below.

Hi,

If I want to limit the DB of an audio string should it be done by the codec?

Hi!

What do you mean by the “DB of an audio string” ?

looks great!

I hate to ask this kind of questions, but oh well… Is it possible to somehow play gaplessly with your library like with .setNextMediaPlayer(…) on Android?

It's very nice, but does it support changing the tempo/speed of an MP3?

Anybody managed to make this project working with a online live stream ? Not able to stream any live stream here…

Hey toto, it should work just fine. Try various sources, your first test stream might have issues.

Hi, that's good,

but what if the audio data is not in a local file, but in a buffer such as byte[] Audidata? What should I do then?

“setDataSource()” can’t take an array as input, and that is the problem I'm facing now.

Hi,

I’ve been playing with OpenMXPlayer to replace MediaPlayer in my streaming application because of the buffering issues with MediaPlayer.

I’m having issues playing AAC+ streams. MediaPlayer and ExoPlayer handle these streams just fine but OpenMXPlayer plays them back at half speed because MediaExtractor detects the AAC format incorrectly with half of the sample rate. It also takes MediaExtractor a while to determine the format of the stream unlike with MP3.

Here are sample streams which exhibit this behavior:

http://pub8.di.fm:80/di_vocaltrance_aacplus

http://pub8.di.fm:80/di_vocaltrance_aac

The following MP3 stream starts playing immediately:

http://pub8.di.fm:80/di_vocaltrance

I was able to fix the half-speed playing problem by doing the following on the format object:

format.setInteger(MediaFormat.KEY_SAMPLE_RATE, 44100);

format.setInteger(MediaFormat.KEY_AAC_PROFILE, MediaCodecInfo.CodecProfileLevel.AACObjectHE);

Do you have any idea how to get MediaExtractor to properly detect the AAC format and make it as quick as an MP3 based stream?

Hello Jeff,

I started with this library for solving the delay with playing MP3 files, somewhat similar to your issue with AAC.

I have no clue on why the aac gets delayed, you will need to take it further and check the MediaExtractor source code in regards to AAC behaviour, unless someone else here has any input.

Radu

Hi, I am a newcomer to Android and am quite confused about StageFright, the MediaCodec API and OpenMAX: what’s the relationship between them? Does MediaCodec only support hardware video decoders? If I want to add my own software decoder, could I still use the MediaCodec API to call my decoder, or do I need to use OpenMAX? By the way, what’s the process behind queueInputBuffer? Is it possible to follow the source code until it reaches the software decoder that decodes the data?

Thanks a lot.

Simon