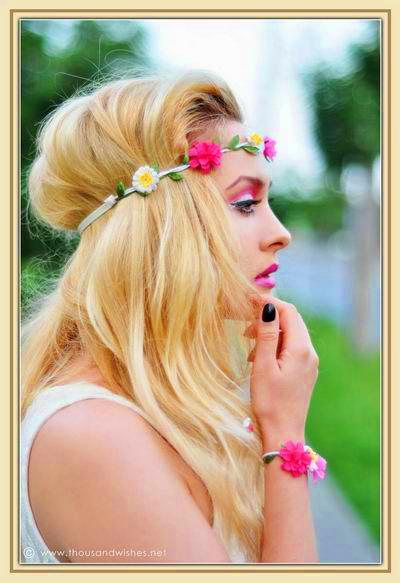

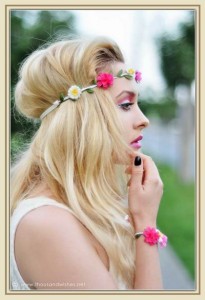

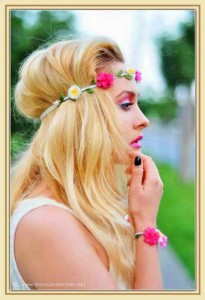

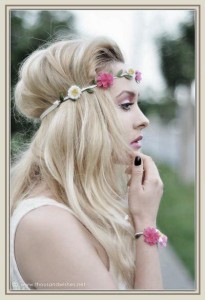

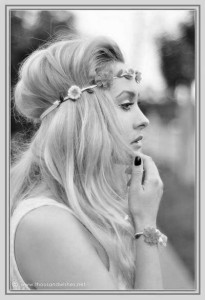

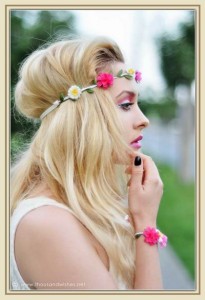

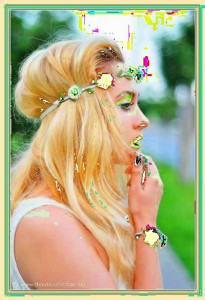

Increasing the saturation in an image is equivalent to increasing the “amount of color”, while a completely desaturated image would be a grayscale image. See the images below:

Normal | Saturated | Desaturated | Grayscale

Logic and Implementation Algorithm

Images are composed of pixels. In an RGB24 image, each pixel is a set of 3 bytes, one for each of the three color channels: red, green and blue. One byte (8 bits) per channel means 2^8 = 256 levels per channel, so RGB24 allows a maximum of 256 x 256 x 256 = 2^24 = 16777216 colors, and every pixel in an RGB24 image takes one of these values. This gives a good color resolution. RGB16 allows only 2^16 = 65536 colors (5 bits for red, 6 bits for green and 5 bits for blue; green gets the extra bit because the human eye is more sensitive to green), so the quality is noticeably reduced but still acceptable.
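To make the 5/6/5 bit split concrete, here is a minimal sketch of packing an 8-bit-per-channel color into a 16-bit word and expanding it again. The function names packRGB565 and unpackRGB565 are made up for this illustration and the bit order (red in the high bits) is an assumption; real pixel formats may order the channels differently.

```cpp
#include <cstdint>

// Pack 8-bit-per-channel RGB into a 16-bit word: the 5 high bits of red,
// the 6 high bits of green and the 5 high bits of blue (red in the top bits).
inline std::uint16_t packRGB565(std::uint8_t r, std::uint8_t g, std::uint8_t b) {
    return static_cast<std::uint16_t>(((r >> 3) << 11) | ((g >> 2) << 5) | (b >> 3));
}

// Expand a 16-bit word back to 8 bits per channel.
// The low bits dropped by packing cannot be recovered; they come back as zero.
inline void unpackRGB565(std::uint16_t p, std::uint8_t &r, std::uint8_t &g, std::uint8_t &b) {
    r = static_cast<std::uint8_t>(((p >> 11) & 0x1F) << 3);
    g = static_cast<std::uint8_t>(((p >> 5) & 0x3F) << 2);
    b = static_cast<std::uint8_t>((p & 0x1F) << 3);
}
```

Packing (200, 100, 50) and unpacking it again yields (200, 100, 48): the blue channel lost its low bits, which is the quality reduction mentioned above.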

To increase the saturation of an image, we take every pixel and enhance its color level. From the RGB representation we compute the HSL (Hue, Saturation, Lightness) representation, another way of describing the same color. We scale the Saturation by a given factor (a decrease is also possible), convert back to RGB, and save the modified image.

You can read more on color spaces here.

Converting from RGB to HSL

void convertRGBToHSL(l_int32 rval,l_int32 gval,l_int32 bval,

l_int32 *hval, l_int32 *sval, l_int32 *lval) {

l_float32 r, g, b, h, s, l; //this function works with floats between 0 and 1

r = rval / 255.0;

g = gval / 255.0;

b = bval / 255.0;

//Then, minColor and maxColor are defined. minColor is the value of the color component with

// the smallest value, while maxColor is the value of the color component with the largest value.

// These two variables are needed because the Lightness is defined as (minColor + maxColor) / 2.

float maxColor = MAX(r, MAX(g, b));

float minColor = MIN(r, MIN(g, b));

//If minColor equals maxColor, we know that R=G=B and thus the color is a shade of gray.

// This is a trivial case, hue can be set to anything, saturation has to be set to 0 because

// only then it's a shade of gray, and lightness is set to R=G=B, the shade of the gray.

//R == G == B, so it's a shade of gray

if((r == g)&&(g == b)) {

h = 0.0; //it doesn't matter what value it has

s = 0.0;

l = r; //doesn't matter if you pick r, g, or b

}

// If minColor is not equal to maxColor, we have a real color instead of a shade of gray,

// so more calculations are needed:

// Lightness (l) is now set to its definition of (minColor + maxColor)/2.

// Saturation (s) is then calculated with a different formula depending on whether lightness is in the first

// half or the second half. This is because the HSL model can be represented as a double cone, the

// first cone has a black tip and corresponds to the first half of lightness values, the second cone

// has a white tip and contains the second half of lightness values.

// Hue (h) is calculated with a different formula depending on which of the 3 color components is

// the dominating one, and then normalized to a number between 0 and 1.

else {

l_float32 d = maxColor - minColor;

l = (minColor + maxColor) / 2;

if(l < 0.5) s = d / (maxColor + minColor);

else s = d / (2.0 - maxColor - minColor);

if(r == maxColor) h = (g - b) / (maxColor - minColor);

else if(g == maxColor) h = 2.0 + (b - r) / (maxColor - minColor);

else h = 4.0 + (r - g) / (maxColor - minColor);

h /= 6; //to bring it to a number between 0 and 1

if(h < 0) h ++;

}

//Finally, H, S and L are calculated out of h, s and l as integers (H in degrees 0..360, S and L in 0..255) and

// "returned" as the result. Returned, because H, S and L were passed to the function as pointers.

*hval = int(h * 360.0);

*sval = int(s * 255.0);

*lval = int(l * 255.0);

}
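As a quick sanity check of the conversion, the sketch below restates the same steps with plain int and float types so it compiles without Leptonica. The names HslColor and rgbToHsl are hypothetical and exist only for this example; the arithmetic follows the function above.

```cpp
#include <algorithm>

// Hue in degrees, saturation and lightness in 0..255 (hypothetical helper type).
struct HslColor { int h; int s; int l; };

// Same steps as convertRGBToHSL above, restated with standard types.
inline HslColor rgbToHsl(int rval, int gval, int bval) {
    float r = rval / 255.0f, g = gval / 255.0f, b = bval / 255.0f;
    float maxC = std::max(r, std::max(g, b));
    float minC = std::min(r, std::min(g, b));
    float h = 0.0f, s = 0.0f;
    float l = (minC + maxC) / 2.0f;
    if (maxC != minC) {                       // a real color, not a shade of gray
        float d = maxC - minC;
        s = (l < 0.5f) ? d / (maxC + minC) : d / (2.0f - maxC - minC);
        if (r == maxC)      h = (g - b) / d;
        else if (g == maxC) h = 2.0f + (b - r) / d;
        else                h = 4.0f + (r - g) / d;
        h /= 6.0f;                            // bring hue to 0..1
        if (h < 0.0f) h += 1.0f;
    }
    return { static_cast<int>(h * 360.0f),
             static_cast<int>(s * 255.0f),
             static_cast<int>(l * 255.0f) };
}
```

Pure red (255, 0, 0) comes out as H = 0, S = 255, L = 127, pure green as H = 120 with the same S and L, and white as S = 0, L = 255. Note that L is truncated, not rounded, so 127.5 becomes 127.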

Converting from HSL to RGB

void convertHSLToRGB(l_int32 hval, l_int32 sval, l_int32 lval,

l_int32 *rval, l_int32 *gval, l_int32 *bval) {

float r, g, b, h, s, l; //this function works with floats between 0 and 1

float temp1, temp2, tempr, tempg, tempb;

h = (hval % 360) / 360.0;

s = sval / 255.0;

l = lval / 255.0;

//Then follows a trivial case: if the saturation is 0, the color will be a grayscale color,

// and the calculation is then very simple: r, g and b are all set to the lightness.

//If saturation is 0, the color is a shade of gray

if(s == 0){

r = l;

g = l;

b = l;

}

//If the saturation is higher than 0, more calculations are needed again. red, green and blue

// are calculated with the formulas defined in the code.

//If saturation > 0, more complex calculations are needed

else {

//Set the temporary values

if(l < 0.5) temp2 = l * (1 + s);

else

temp2 = (l + s) - (l * s);

temp1 = 2 * l - temp2;

tempr = h + 1.0 / 3.0;

if(tempr > 1) tempr--;

tempg = h;

tempb = h - 1.0 / 3.0;

if(tempb < 0) tempb++;

//Red

if(tempr < 1.0 / 6.0) r = temp1 + (temp2 - temp1) * 6.0 * tempr;

else if(tempr < 0.5) r = temp2;

else if(tempr < 2.0 / 3.0) r = temp1 + (temp2 - temp1) * ((2.0 / 3.0) - tempr) * 6.0;

else r = temp1;

//Green

if(tempg < 1.0 / 6.0) g = temp1 + (temp2 - temp1) * 6.0 * tempg;

else if(tempg < 0.5) g = temp2;

else if(tempg < 2.0 / 3.0) g = temp1 + (temp2 - temp1) * ((2.0 / 3.0) - tempg) * 6.0;

else g = temp1;

//Blue

if(tempb < 1.0 / 6.0) b = temp1 + (temp2 - temp1) * 6.0 * tempb;

else if(tempb < 0.5) b = temp2;

else if(tempb < 2.0 / 3.0) b = temp1 + (temp2 - temp1) * ((2.0 / 3.0) - tempb) * 6.0;

else b = temp1;

}

//And finally, the results are returned as integers between 0 and 255.

*rval = int(r * 255.0);

*gval = int(g * 255.0);

*bval = int(b * 255.0);

}
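The red, green and blue branches above are the same formula applied to three different hue offsets, so they can be folded into one helper. The sketch below is one possible way to do that, not the article's code: hueToChannel, RgbColor and hslToRgb are hypothetical names, the scale factor of 255 for S and L is assumed to match convertRGBToHSL, and the result is rounded to nearest instead of truncated.

```cpp
#include <cmath>

// One channel of the HSL -> RGB conversion. t is the hue offset for that
// channel (h + 1/3 for red, h for green, h - 1/3 for blue), wrapped into 0..1.
inline float hueToChannel(float temp1, float temp2, float t) {
    if (t < 0.0f) t += 1.0f;
    if (t > 1.0f) t -= 1.0f;
    if (t < 1.0f / 6.0f) return temp1 + (temp2 - temp1) * 6.0f * t;
    if (t < 0.5f)        return temp2;
    if (t < 2.0f / 3.0f) return temp1 + (temp2 - temp1) * (2.0f / 3.0f - t) * 6.0f;
    return temp1;
}

struct RgbColor { int r; int g; int b; };    // each channel in 0..255

// hval in degrees (non-negative), sval and lval in 0..255.
inline RgbColor hslToRgb(int hval, int sval, int lval) {
    float h = (hval % 360) / 360.0f;
    float s = sval / 255.0f;
    float l = lval / 255.0f;
    float r = l, g = l, b = l;                // shade of gray when s == 0
    if (s > 0.0f) {
        float temp2 = (l < 0.5f) ? l * (1.0f + s) : (l + s) - (l * s);
        float temp1 = 2.0f * l - temp2;
        r = hueToChannel(temp1, temp2, h + 1.0f / 3.0f);
        g = hueToChannel(temp1, temp2, h);
        b = hueToChannel(temp1, temp2, h - 1.0f / 3.0f);
    }
    // Round to nearest; the listing above truncates instead.
    return { static_cast<int>(std::lround(r * 255.0f)),
             static_cast<int>(std::lround(g * 255.0f)),
             static_cast<int>(std::lround(b * 255.0f)) };
}
```

With these assumptions, H = 0, S = 255, L = 127 maps back to (254, 0, 0), one level short of pure red because L was truncated from 127.5 on the way in. A hue of 360 wraps around to the same result as a hue of 0.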

As described above, a first attempt at increasing the saturation is:

l_int32 h, s, l;

convertRGBToHSL(rval, gval, bval, &h, &s, &l);

s = s * 2;

convertHSLToRGB(h,s,l, &rval, &gval, &bval);

But what happens to pixels that already have a high level of saturation? They go off-scale and compromise the image. The s = s * 2 transformation has the following effect:

Normal | Algorithm issues

Defect analysis

The defects illustrated above have two causes:

1. some pixels are already highly saturated, with a saturation level close to the maximum; increasing it further pushes it past the maximum and clips it, distorting the image

2. some pixels are close to grayscale; saturating them makes little sense, and doing so produces artifacts / defects
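The first cause can be checked numerically. In the HSL to RGB conversion, every channel ends up between the two intermediates temp1 and temp2, so it is enough to look at those. The sketch below (ChannelRange and channelRange are hypothetical names for this illustration) computes them for a given lightness and saturation, both as floats where 1.0 is the valid maximum.

```cpp
// Smallest (lo) and largest (hi) channel value, before scaling to 0..255,
// that the HSL -> RGB formulas produce for a given lightness and saturation.
// These are the temp1 / temp2 intermediates of the conversion.
struct ChannelRange { float lo; float hi; };

inline ChannelRange channelRange(float l, float s) {
    float temp2 = (l < 0.5f) ? l * (1.0f + s) : (l + s) - (l * s);
    float temp1 = 2.0f * l - temp2;
    return { temp1, temp2 };
}
```

For l = 0.5 and a valid saturation of 1.0 the range is exactly 0..1. If a saturation of 200 is doubled to 400, the conversion sees s = 400 / 255 (about 1.57) and the range becomes roughly -0.28..1.28, so some channels land below 0 or above 255 once scaled.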

Proposed solution

Let "fact" be our saturation modification factor (called fract in the code further down). The basic transformation is additive: newsat = sat + fact; but it needs two corrections.

Problem 1: For increasing saturation, we need to check the remaining saturation interval space, and make sure our new value will not be greater.

According to the conversion algorithms above, the maximum saturation value is 255. If a pixel's saturation is "Ps", it can be increased by at most 255 - Ps (e.g. for 210, by at most 45). This translates to newsat = sat + (255 - sat) * fact; where fact is a float between 0 and 1.

For decreasing the saturation, a pixel of saturation "Ps" cannot be desaturated by more than "Ps" (e.g. for 20, by at most 20). This becomes newsat = sat + sat * fact; where fact is a float between -1 and 0. Because fact is negative, the resulting saturation is reduced.
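The two cases can be written as one piecewise rule. The symbols here are shorthand chosen for this note: s is the current saturation (0..255), f is the factor, and s' is the new saturation.

```latex
s' =
\begin{cases}
  s + (255 - s)\, f, & 0 \le f \le 1 \\
  s + s\, f,         & -1 \le f < 0
\end{cases}
```

With s = 210 and f = 1 this gives 210 + 45 = 255, and with s = 20 and f = -1 it gives 20 - 20 = 0, so the result always stays inside 0..255.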

Problem 2: Grayscale colors have a low saturation value. We don't want to saturate these, so a compensation factor is applied: gray_factor = sat / 255.0. For highly saturated colors it tends to 1, and for grayscale it goes down to 0. It is only needed when increasing the saturation.
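Both corrections can be tried in isolation before touching any image. The following is a minimal sketch of the per-pixel rule as a standalone function; adjustSaturation is a hypothetical name, and the truncation to int is an assumption meant to mirror assigning a float result to an integer saturation.

```cpp
#include <algorithm>

// Apply the saturation rule described above to one saturation value.
// sat is in 0..255, fact is clamped to -1..1.
inline int adjustSaturation(int sat, float fact) {
    fact = std::max(-1.0f, std::min(1.0f, fact));
    if (fact >= 0.0f) {
        float grayFactor = sat / 255.0f;   // near 0 for grays, near 1 for vivid colors
        float headroom = 255.0f - sat;     // room left before saturation maxes out
        return static_cast<int>(sat + fact * headroom * grayFactor);
    }
    // fact is negative here, so this subtracts at most sat
    return static_cast<int>(sat + fact * sat);
}
```

A gray pixel (sat = 0) stays at 0 even with fact = 1, a pixel at 210 moves to 247 instead of overshooting, and a pixel at 20 with fact = -1 drops to 0.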

The final algorithm becomes:

void pixSat(PIX *pixs, l_float32 fract) {

// normalize parameters

if (fract < -1) fract = -1;

else if (fract > 1) fract = 1;

l_uint32 *datas, *lines;

l_int32 i, j, w, h, wpls;

l_int32 rval, gval, bval;

if (!pixs || pixGetDepth(pixs) != 32)

return; //not 32bpp

pixGetDimensions(pixs, &w, &h, NULL);

datas = pixGetData(pixs);

wpls = pixGetWpl(pixs);

for (i = 0; i < h; i++) {

lines = datas + i * wpls;

for (j = 0; j < w; j++) {

extractRGBValues(lines[j], &rval, &gval, &bval);

// HSL components; this inner h shadows the image height h only inside the loop body

l_int32 h, s, l;

convertRGBToHSL(rval, gval, bval, &h, &s, &l);

if (fract >= 0) {

// we don't want to saturate unsaturated colors -> we get only defects

// for unsaturated colors this tends to 0

float gray_factor = (float)s / 255.0;

// how far can we go?

// if we increase saturation, we have "255-s" space left

float var_interval = 255 - s;

// compute the new saturation

s = s + fract * var_interval * gray_factor;

} else {

// how far can we go?

// for decrease we have "s" left

float var_interval = s;

s = s + fract * var_interval ;

}

convertHSLToRGB(h,s,l, &rval, &gval, &bval);

composeRGBPixel(rval, gval, bval, lines + j);

}

}

}

As you can see, this code is built on Leptonica. It properly saturates or desaturates the input images, as shown in the images at the beginning of this article.

You can download the binary and test it here:

TestSaturation

You might also need liblept168.dll placed in the same folder for the .exe to work.